NHITS specializes its partial outputs in the different frequencies of
the time series through hierarchical interpolation and multi-rate input
processing.

In this notebook we show how to use NHITS on the ETTm2 benchmark
dataset. This dataset includes data points for 2 electricity
transformers at 2 stations, including load and oil temperature.

We will show you how to load data, train a model, and perform automatic
hyperparameter tuning to achieve state-of-the-art (SoTA) performance,
outperforming even the latest Transformer architectures at a fraction of
their computational cost (50x faster).

You can run these experiments using GPU with Google Colab.

1. Installing NeuralForecast

2. Load ETTm2 Data

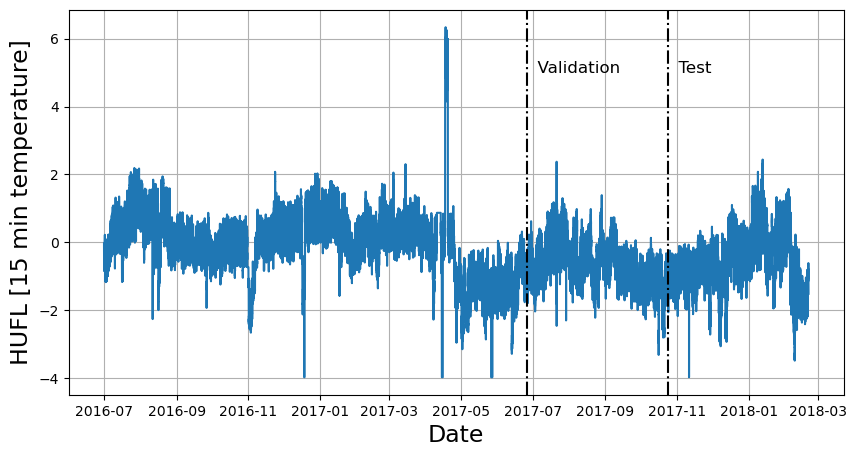

The `LongHorizon` class will automatically download the complete ETTm2
dataset and process it.

It returns three DataFrames: `Y_df` contains the values for the target
variables, `X_df` contains exogenous calendar features, and `S_df`
contains static features for each time series (none for ETTm2). For this
example we will only use `Y_df`.

If you want to use your own data, just replace `Y_df`. Be sure to use a
long format and have a similar structure to our dataset.
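If your data is stored in a wide layout (one column per series), a minimal sketch of the conversion to the long layout could look like the following. The `wide_df` frame and its values are made up for illustration; only the target column names `unique_id`, `ds`, and `y` come from the table in this section.

```python
import pandas as pd

# Hypothetical wide table: one row per timestamp, one column per series.
wide_df = pd.DataFrame({
    "ds": pd.date_range("2016-07-01 00:00:00", periods=3, freq="15min"),
    "HUFL": [-0.04, -0.19, -0.26],
    "OT": [1.02, 0.98, 0.94],
})

# Long format: one row per (series, timestamp) pair.
Y_df = wide_df.melt(id_vars="ds", var_name="unique_id", value_name="y")

# Reorder columns and sort so each series is contiguous and chronological.
Y_df = (
    Y_df[["unique_id", "ds", "y"]]
    .sort_values(["unique_id", "ds"])
    .reset_index(drop=True)
)
print(Y_df)
```

Each series then occupies a contiguous block of rows, identified by its `unique_id` value.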

| | unique_id | ds | y |
|---|---|---|---|
| 0 | HUFL | 2016-07-01 00:00:00 | -0.041413 |
| 1 | HUFL | 2016-07-01 00:15:00 | -0.185467 |
| 57600 | HULL | 2016-07-01 00:00:00 | 0.040104 |
| 57601 | HULL | 2016-07-01 00:15:00 | -0.214450 |
| 115200 | LUFL | 2016-07-01 00:00:00 | 0.695804 |
| 115201 | LUFL | 2016-07-01 00:15:00 | 0.434685 |
| 172800 | LULL | 2016-07-01 00:00:00 | 0.434430 |
| 172801 | LULL | 2016-07-01 00:15:00 | 0.428168 |
| 230400 | MUFL | 2016-07-01 00:00:00 | -0.599211 |
| 230401 | MUFL | 2016-07-01 00:15:00 | -0.658068 |
| 288000 | MULL | 2016-07-01 00:00:00 | -0.393536 |
| 288001 | MULL | 2016-07-01 00:15:00 | -0.659338 |
| 345600 | OT | 2016-07-01 00:00:00 | 1.018032 |
| 345601 | OT | 2016-07-01 00:15:00 | 0.980124 |

3. Hyperparameter selection and forecasting

The `AutoNHITS` class will automatically perform hyperparameter tuning
using the Tune library, exploring a user-defined or default search
space. Models are selected based on the error on a validation set, and
the best model is then stored and used during inference.
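The selection logic described above can be illustrated with a toy random search in plain Python. This is only a sketch of the idea, not how Tune or `AutoNHITS` is implemented: the search space, the `validation_loss` stand-in, and all function names are hypothetical, and no model is trained.

```python
import random

def sample_config(rng):
    """Draw one configuration from a hypothetical search space."""
    return {
        "input_size_multiplier": rng.choice([1, 2, 5]),   # discrete list
        "learning_rate": 10 ** rng.uniform(-4, -2),       # continuous interval (log scale)
    }

def validation_loss(config):
    """Stand-in for 'train the model and measure the validation error'."""
    return abs(config["learning_rate"] - 1e-3) + 0.01 / config["input_size_multiplier"]

def random_search(num_samples, seed=0):
    """Evaluate num_samples configurations and keep the one with the lowest loss."""
    rng = random.Random(seed)
    trials = [sample_config(rng) for _ in range(num_samples)]
    losses = [validation_loss(config) for config in trials]
    best = min(range(num_samples), key=losses.__getitem__)
    return trials[best], losses[best], losses

best_config, best_loss, all_losses = random_search(num_samples=5)
print(best_config, best_loss)
```

Here `num_samples` plays the same role as the number of configurations explored: more samples cover the space better at a higher computational cost.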

The `AutoNHITS.default_config` attribute contains a suggested
hyperparameter space. Here, we specify a different search space
following the paper’s hyperparameters. Notice that 1000 stochastic
gradient steps are enough to achieve SoTA performance. Feel free to
play around with this space.

Tip: Refer to https://docs.ray.io/en/latest/tune/index.html for more information on the different space options, such as lists and continuous intervals.

To instantiate `AutoNHITS` you need to define:

- `h`: forecasting horizon.
- `loss`: training loss. Use the `DistributionLoss` to produce probabilistic forecasts.
- `config`: hyperparameter search space. If `None`, the `AutoNHITS` class will use a pre-defined suggested hyperparameter space.
- `num_samples`: number of configurations explored.

Next, instantiate a `NeuralForecast` object with the following required
parameters:

- `models`: a list of models.
- `freq`: a string indicating the frequency of the data. (See pandas’ available frequencies.)

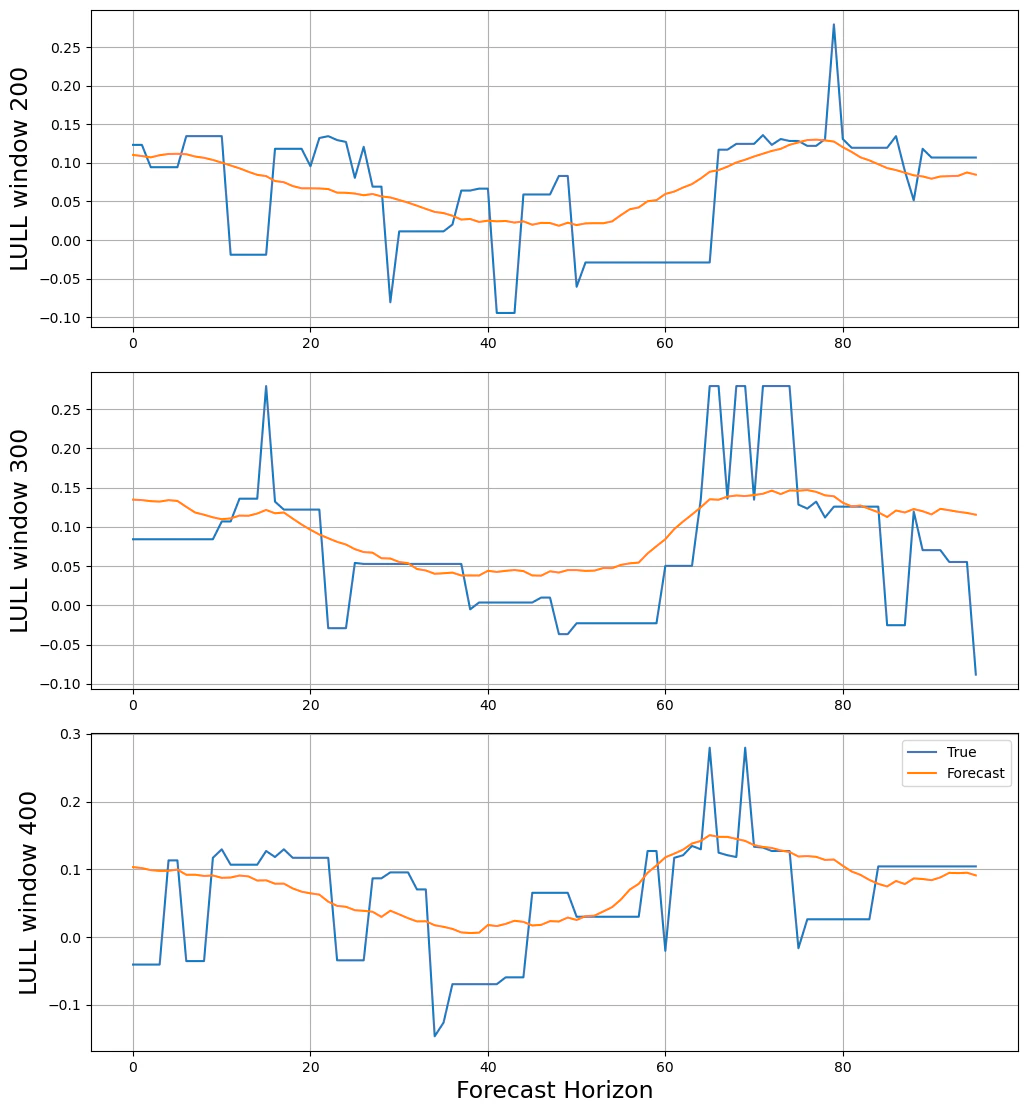

The `cross_validation` method allows you to simulate multiple historic
forecasts, greatly simplifying pipelines by replacing for loops with
`fit` and `predict` methods.

With time series data, cross validation is done by defining a sliding
window across the historical data and predicting the period following
it. This form of cross validation allows us to arrive at a better
estimation of our model’s predictive abilities across a wider range of
temporal instances while also keeping the data in the training set
contiguous as is required by our models.
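The windowing idea can be sketched in a few lines of plain Python. This is an illustration only, not the library's implementation: the function name `rolling_origin_splits` and its parameters are hypothetical, and the training range here grows with each window (a fixed-length training window would be a simple variation).

```python
def rolling_origin_splits(n_obs, horizon, n_windows, step_size=None):
    """Yield (train_range, test_range) pairs with contiguous training data.

    Each test range covers the `horizon` observations right after the
    training data, and the last window ends at the final observation.
    """
    step_size = step_size or horizon
    first_cutoff = n_obs - horizon - (n_windows - 1) * step_size
    if first_cutoff <= 0:
        raise ValueError("not enough observations for the requested windows")
    for w in range(n_windows):
        cutoff = first_cutoff + w * step_size
        yield range(0, cutoff), range(cutoff, cutoff + horizon)

for train_idx, test_idx in rolling_origin_splits(n_obs=20, horizon=4, n_windows=3):
    print(f"train 0..{train_idx[-1]} -> forecast {test_idx[0]}..{test_idx[-1]}")
# train 0..7 -> forecast 8..11
# train 0..11 -> forecast 12..15
# train 0..15 -> forecast 16..19
```

Because every training range starts at index 0 and ends right before its test range, no future observation leaks into training.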

The `cross_validation` method will use the validation set for
hyperparameter selection, and will then produce the forecasts for the
test set.

4. Evaluate Results

The `AutoNHITS` class contains a `results` Tune attribute that stores
information about each configuration explored. It contains the
validation loss and the best validation hyperparameters.

To recover or improve on the paper's performance, try setting
`hyperopt_max_evals=30` in Hyperparameter Tuning.

Mean Absolute Error (MAE):

| Horizon | NHITS | AutoFormer | InFormer | ARIMA |
|---|---|---|---|---|
| 96 | 0.249 | 0.339 | 0.453 | 0.301 |
| 192 | 0.305 | 0.340 | 0.563 | 0.345 |
| 336 | 0.346 | 0.372 | 0.887 | 0.386 |
| 720 | 0.426 | 0.419 | 1.388 | 0.445 |

Mean Squared Error (MSE):

| Horizon | NHITS | AutoFormer | InFormer | ARIMA |
|---|---|---|---|---|
| 96 | 0.173 | 0.255 | 0.365 | 0.225 |
| 192 | 0.245 | 0.281 | 0.533 | 0.298 |
| 336 | 0.295 | 0.339 | 1.363 | 0.370 |
| 720 | 0.401 | 0.422 | 3.379 | 0.478 |
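For reference, error metrics of this kind can be computed from forecasts and actual values as in the sketch below. The arrays are made-up numbers for illustration, not ETTm2 results, and the helper names `mae` and `mse` are defined here rather than taken from any library.

```python
import numpy as np

def mae(y_true, y_pred):
    """Mean absolute error: average magnitude of the forecast errors."""
    return float(np.mean(np.abs(np.asarray(y_true) - np.asarray(y_pred))))

def mse(y_true, y_pred):
    """Mean squared error: average squared forecast error."""
    return float(np.mean((np.asarray(y_true) - np.asarray(y_pred)) ** 2))

y_true = [1.0, 2.0, 3.0, 4.0]   # hypothetical actual values
y_pred = [1.5, 2.0, 2.0, 4.5]   # hypothetical forecasts

print(mae(y_true, y_pred))  # 0.5
print(mse(y_true, y_pred))  # 0.375
```

Squaring makes MSE weigh large errors more heavily than MAE, which is why a model with occasional large misses can look much worse on MSE than on MAE.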