Step-by-step guide on using theIn this walkthrough, we will become familiar with the mainARIMA ModelwithStatsforecast.

StatsForecast class and some relevant methods such as

StatsForecast.plot, StatsForecast.forecast and

StatsForecast.cross_validation.

The text in this article is largely taken from Rob J. Hyndman and

George Athanasopoulos (2018). “Forecasting Principles and Practice (3rd

ed)”.

Table of Contents

- Introduction

- ARIMA Models

- The meaning of p, d and q in ARIMA model

- AR and MA models

- ARIMA model

- How to find the order of differencing (d) in ARIMA model

- Loading libraries and data

- Explore data with the plot method

- How to find the order of the AR term (p)

- How to find the order of the MA term (q)

- How to handle if a time series is slightly under or over differenced

- Implementation of ARIMA with StatsForecast

- Cross-validation

- Model evaluation

- References

Introduction

- A Time Series is defined as a series of data points recorded at different time intervals. The time order can be daily, monthly, or even yearly.

- Time Series forecasting is the process of using a statistical model to predict future values of a time series based on past results.

- We have discussed various aspects of Time Series Forecasting in the previous notebook.

- Forecasting is the step where we want to predict the future values the series is going to take. Forecasting a time series is often of tremendous commercial value.

- If we use only the previous values of the time series to predict its future values, it is called Univariate Time Series Forecasting.

- If we use predictors other than the series (like exogenous variables) to forecast it is called Multi Variate Time Series Forecasting.

- This notebook focuses on a particular type of forecasting method called ARIMA modeling.

Introduction to ARIMA Models

- ARIMA stands for Autoregressive Integrated Moving Average Model. It belongs to a class of models that explains a given time series based on its own past values -i.e.- its own lags and the lagged forecast errors. The equation can be used to forecast future values. Any ‘non-seasonal’ time series that exhibits patterns and is not a random white noise can be modeled with ARIMA models.

- So, ARIMA, short for AutoRegressive Integrated Moving Average, is a forecasting algorithm based on the idea that the information in the past values of the time series can alone be used to predict the future values.

-

ARIMA Models are specified by three order parameters: (p, d, q),

where,

- p is the order of the AR term

- q is the order of the MA term

- d is the number of differencing required to make the time series stationary

- AR(p) Autoregression - a regression model that utilizes the dependent relationship between a current observation and observations over a previous period. An auto regressive (AR(p)) component refers to the use of past values in the regression equation for the time series.

- I(d) Integration - uses differencing of observations (subtracting an observation from observation at the previous time step) in order to make the time series stationary. Differencing involves the subtraction of the current values of a series with its previous values d number of times.

- MA(q) Moving Average - a model that uses the dependency between an observation and a residual error from a moving average model applied to lagged observations. A moving average component depicts the error of the model as a combination of previous error terms. The order q represents the number of terms to be included in the model.

Types of ARIMA Model

- ARIMA : Non-seasonal Autoregressive Integrated Moving Averages

- SARIMA : Seasonal ARIMA

- SARIMAX : Seasonal ARIMA with exogenous variables

The meaning of p, d and q in ARIMA model

The meaning of p

pis the order of the Auto Regressive (AR) term. It refers to the number of lags of Y to be used as predictors.

The meaning of d

- The term Auto Regressive’ in ARIMA means it is a linear regression model that uses its own lags as predictors. Linear regression models, as we know, work best when the predictors are not correlated and are independent of each other. So we need to make the time series stationary.

- The most common approach to make the series stationary is to difference it. That is, subtract the previous value from the current value. Sometimes, depending on the complexity of the series, more than one differencing may be needed.

- The value of d, therefore, is the minimum number of differencing needed to make the series stationary. If the time series is already stationary, then d = 0.

The meaning of q

- q is the order of the Moving Average (MA) term. It refers to the number of lagged forecast errors that should go into the ARIMA Model.

AR and MA models

AR model

In an autoregression model, we forecast the variable of interest using a linear combination of past values of the variable. The term autoregression indicates that it is a regression of the variable against itself. Thus, an autoregressive model of order p can be written as where is white noise. This is like a multiple regression but with lagged values of as predictors. We refer to this as an AR( p) model, an autoregressive model of order p.MA model

Rather than using past values of the forecast variable in a regression, a moving average model uses past forecast errors in a regression-like model, where is white noise. We refer to this as an MA(q) model, a moving average model of order q. Of course, we do not observe the values of, so it is not really a regression in the usual sense. Notice that each value of yt can be thought of as a weighted moving average of the past few forecast errors (although the coefficients will not normally sum to one). However, moving average models should not be confused with the moving average smoothing . A moving average model is used for forecasting future values, while moving average smoothing is used for estimating the trend-cycle of past values. Thus, we have discussed AR and MA Models respectively.

ARIMA model

If we combine differencing with autoregression and a moving average model, we obtain a non-seasonal ARIMA model. ARIMA is an acronym for AutoRegressive Integrated Moving Average (in this context, “integration” is the reverse of differencing). The full model can be written as where is the differenced series (it may have been differenced more than once). The “predictors” on the right hand side include both lagged values of and lagged errors. We call this an ARIMA(p,d,q) model, where| p | order of the autoregressive part |

| d | degree of first differencing involved |

| q | order of the moving average part |

| Model | p d q | Differenced | Method |

|---|---|---|---|

| Arima(0,0,0) | 0 0 0 | White noise | |

| ARIMA (0,1,0) | 0 1 0 | Random walk | |

| ARIMA (0,2,0) | 0 2 0 | Constant | |

| ARIMA (1,0,0) | 1 0 0 | AR(1): AR(1): First-order regression model | |

| ARIMA (2, 0, 0) | 2 0 0 | AR(2): Second-order regression model | |

| ARIMA (1, 1, 0) | 1 1 0 | Differenced first-order | |

| autoregressive model | |||

| ARIMA (0, 1, 1) | 0 1 1 | Simple exponential | |

| smoothing | |||

| ARIMA (0, 0, 1) | 0 0 1 | MA(1): First-order | |

| regression model | |||

| ARIMA (0, 0, 2) | 0 0 2 | MA(2): Second-order | |

| regression model | |||

| ARIMA (1, 0, 1) | 1 0 1 | ARMA model | |

| ARIMA (1, 1, 1) | 1 1 1 | ARIMA model | |

| ARIMA (1, 1, 2) | 1 1 2 | Damped-trend linear Exponential smoothing | |

| ARIMA (0, 2, 1) OR (0,2,2) | 0 2 1 | Linear exponential smoothing |

ARIMA model in words

Predicted Yt = Constant + Linear combination Lags of Y (upto p lags) + Linear Combination of Lagged forecast errors (upto q lags)How to find the order of differencing (d) in ARIMA model

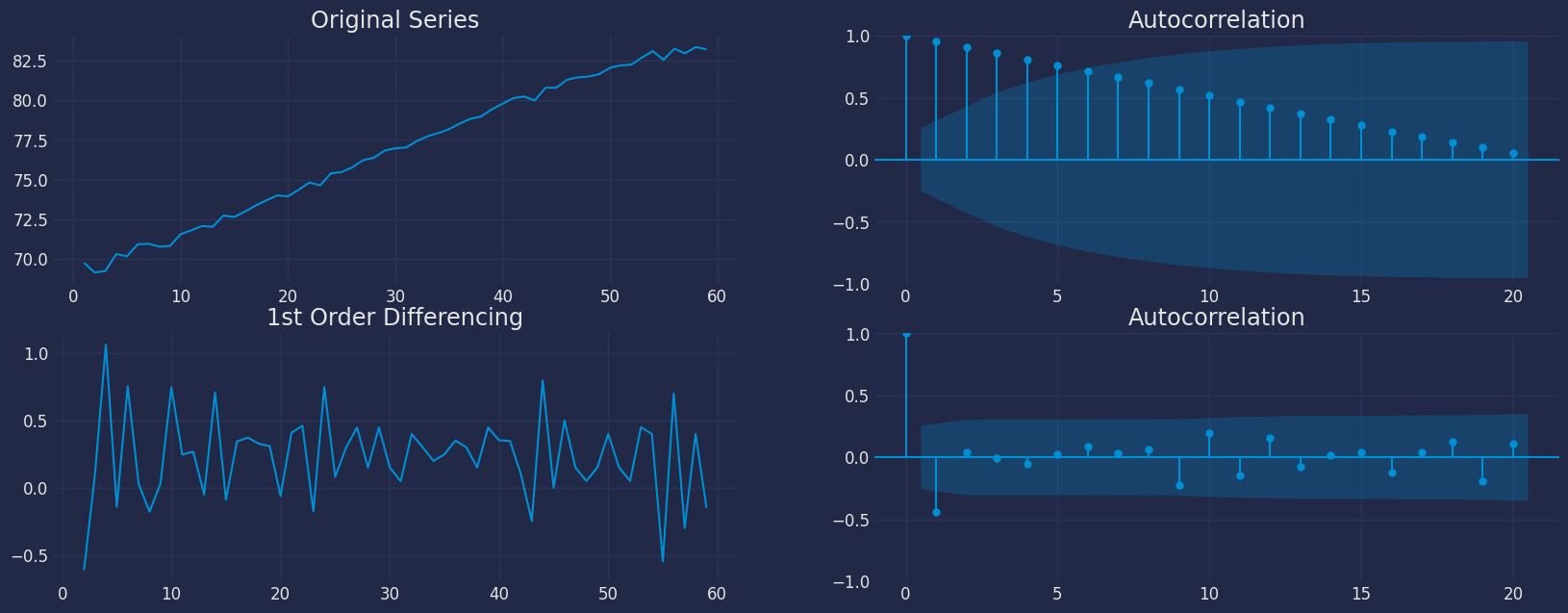

- As stated earlier, the purpose of differencing is to make the time series stationary. But we should be careful to not over-difference the series. An over differenced series may still be stationary, which in turn will affect the model parameters.

- So we should determine the right order of differencing. The right order of differencing is the minimum differencing required to get a near-stationary series which roams around a defined mean and the ACF plot reaches to zero fairly quick.

- If the autocorrelations are positive for many number of lags (10 or more), then the series needs further differencing. On the other hand, if the lag 1 autocorrelation itself is too negative, then the series is probably over-differenced.

- If we can’t really decide between two orders of differencing, then we go with the order that gives the least standard deviation in the differenced series.

-

Now, we will explain these concepts with the help of an example as

follows:

- First, I will check if the series is stationary using the Augmented Dickey Fuller test (ADF Test), from the statsmodels package. The reason being is that we need differencing only if the series is non-stationary. Else, no differencing is needed, that is, d=0.

- The null hypothesis (Ho) of the ADF test is that the time series is non-stationary. So, if the p-value of the test is less than the significance level (0.05) then we reject the null hypothesis and infer that the time series is indeed stationary.

- So, in our case, if P Value > 0.05 we go ahead with finding the order of differencing.

Loading libraries and data

Tip Statsforecast will be needed. To install, see instructions.Next, we import plotting libraries and configure the plotting style.

Read data

| year | value | |

|---|---|---|

| 0 | 1960-01-01 | 69.123902 |

| 1 | 1961-01-01 | 69.760244 |

| 2 | 1962-01-01 | 69.149756 |

| 3 | 1963-01-01 | 69.248049 |

| 4 | 1964-01-01 | 70.311707 |

-

The

unique_id(string, int or category) represents an identifier for the series. -

The

ds(datestamp) column should be of a format expected by Pandas, ideally YYYY-MM-DD for a date or YYYY-MM-DD HH:MM:SS for a timestamp. -

The

y(numeric) represents the measurement we wish to forecast.

| ds | y | unique_id | |

|---|---|---|---|

| 0 | 1960-01-01 | 69.123902 | 1 |

| 1 | 1961-01-01 | 69.760244 | 1 |

| 2 | 1962-01-01 | 69.149756 | 1 |

| 3 | 1963-01-01 | 69.248049 | 1 |

| 4 | 1964-01-01 | 70.311707 | 1 |

ds from the object type to datetime.

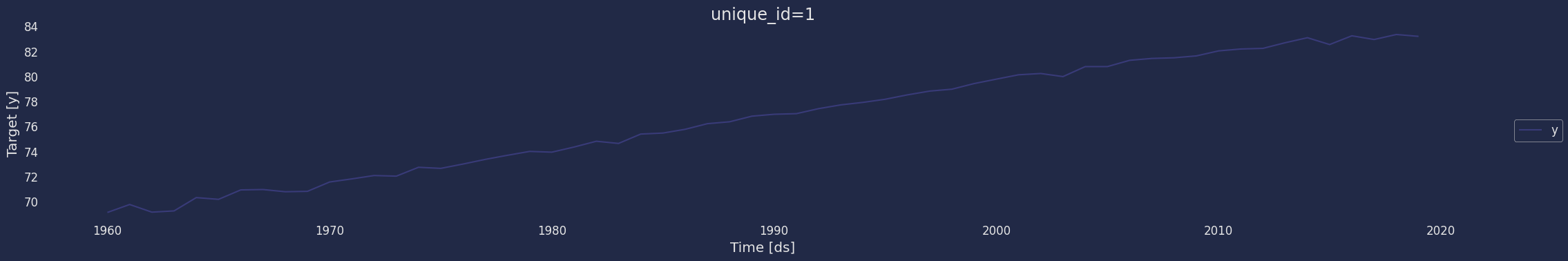

Explore data with the plot method

Plot a series using the plot method from the StatsForecast class. This method prints a random series from the dataset and is useful for basic EDA.

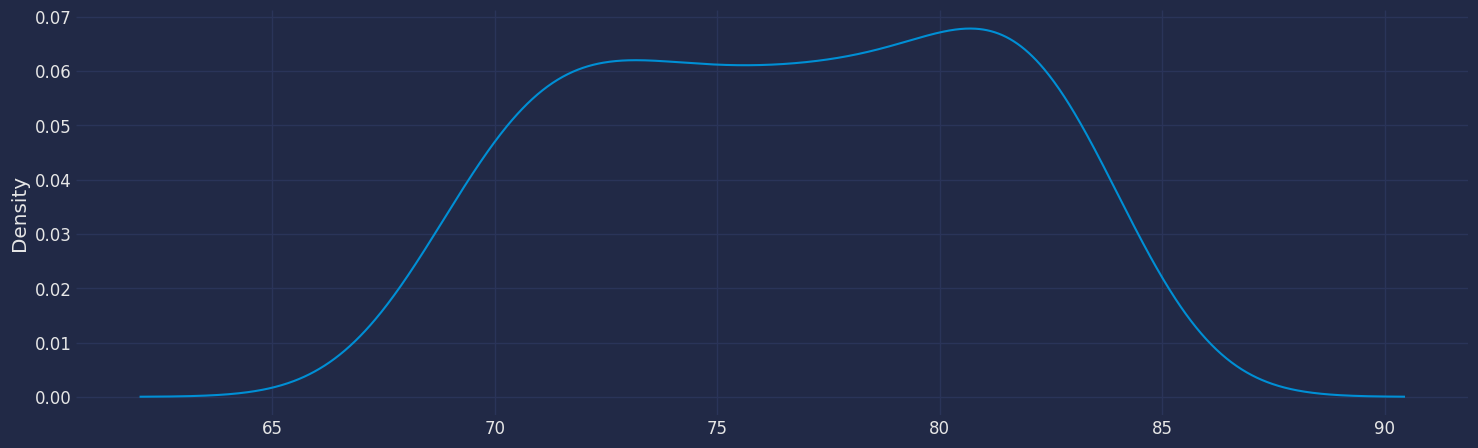

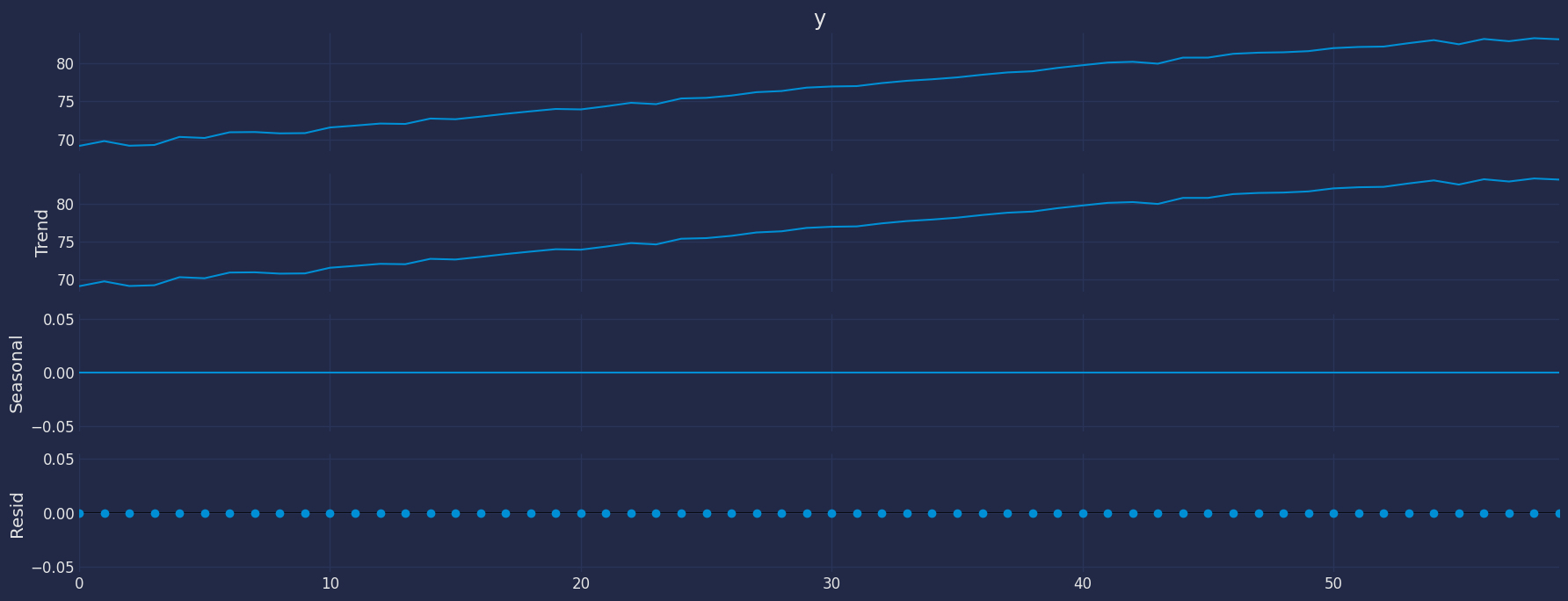

Seasonal Decomposed

How to decompose a time series and why? In time series analysis to forecast new values, it is very important to know past data. More formally, we can say that it is very important to know the patterns that values follow over time. There can be many reasons that cause our forecast values to fall in the wrong direction. Basically, a time series consists of four components. The variation of those components causes the change in the pattern of the time series. These components are:- Level: This is the primary value that averages over time.

- Trend: The trend is the value that causes increasing or decreasing patterns in a time series.

- Seasonality: This is a cyclical event that occurs in a time series for a short time and causes short-term increasing or decreasing patterns in a time series.

- Residual/Noise: These are the random variations in the time series.

Additive time series

If the components of the time series are added to make the time series. Then the time series is called the additive time series. By visualization, we can say that the time series is additive if the increasing or decreasing pattern of the time series is similar throughout the series. The mathematical function of any additive time series can be represented by:Multiplicative time series

If the components of the time series are multiplicative together, then the time series is called a multiplicative time series. For visualization, if the time series is having exponential growth or decline with time, then the time series can be considered as the multiplicative time series. The mathematical function of the multiplicative time series can be represented as.

The Augmented Dickey-Fuller Test

An Augmented Dickey-Fuller (ADF) test is a type of statistical test that determines whether a unit root is present in time series data. Unit roots can cause unpredictable results in time series analysis. A null hypothesis is formed in the unit root test to determine how strongly time series data is affected by a trend. By accepting the null hypothesis, we accept the evidence that the time series data is not stationary. By rejecting the null hypothesis or accepting the alternative hypothesis, we accept the evidence that the time series data is generated by a stationary process. This process is also known as stationary trend. The values of the ADF test statistic are negative. Lower ADF values indicate a stronger rejection of the null hypothesis. Augmented Dickey-Fuller Test is a common statistical test used to test whether a given time series is stationary or not. We can achieve this by defining the null and alternate hypothesis. Null Hypothesis: Time Series is non-stationary. It gives a time-dependent trend. Alternate Hypothesis: Time Series is stationary. In another term, the series doesn’t depend on time. ADF or t Statistic < critical values: Reject the null hypothesis, time series is stationary. ADF or t Statistic > critical values: Failed to reject the null hypothesis, time series is non-stationary.| ds | y | unique_id | y_diff | |

|---|---|---|---|---|

| 1 | 1961-01-01 | 69.760244 | 1 | 0.636341 |

| 2 | 1962-01-01 | 69.149756 | 1 | -0.610488 |

| 3 | 1963-01-01 | 69.248049 | 1 | 0.098293 |

| 4 | 1964-01-01 | 70.311707 | 1 | 1.063659 |

| 5 | 1965-01-01 | 70.171707 | 1 | -0.140000 |

- For the above data, we can see that the time series reaches stationarity with one orders of differencing.

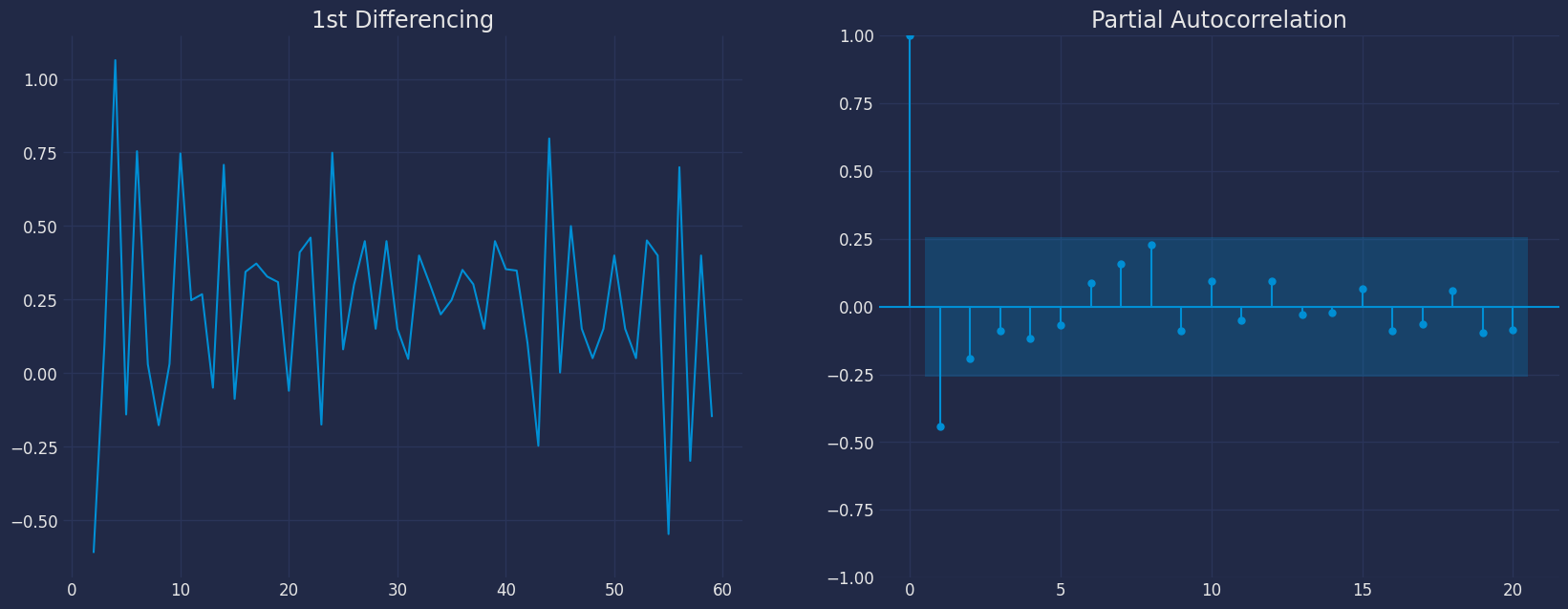

How to find the order of the AR term (p)

- The next step is to identify if the model needs any AR terms. We will find out the required number of AR terms by inspecting the Partial Autocorrelation (PACF) plot.

- Partial autocorrelation can be imagined as the correlation between the series and its lag, after excluding the contributions from the intermediate lags. So, PACF sort of conveys the pure correlation between a lag and the series. This way, we will know if that lag is needed in the AR term or not.

- Partial autocorrelation of lag (k) of a series is the coefficient of that lag in the autoregression equation of .

- That is, suppose, if is the current series and is the lag 1 of , then the partial autocorrelation of lag 3 is the coefficient of in the above equation.

- Now, we should find the number of AR terms. Any autocorrelation in a stationarized series can be rectified by adding enough AR terms. So, we initially take the order of AR term to be equal to as many lags that crosses the significance limit in the PACF plot.

- We can see that the PACF lag 1 is quite significant since it is well above the significance line. So, we will fix the value of p as 1.

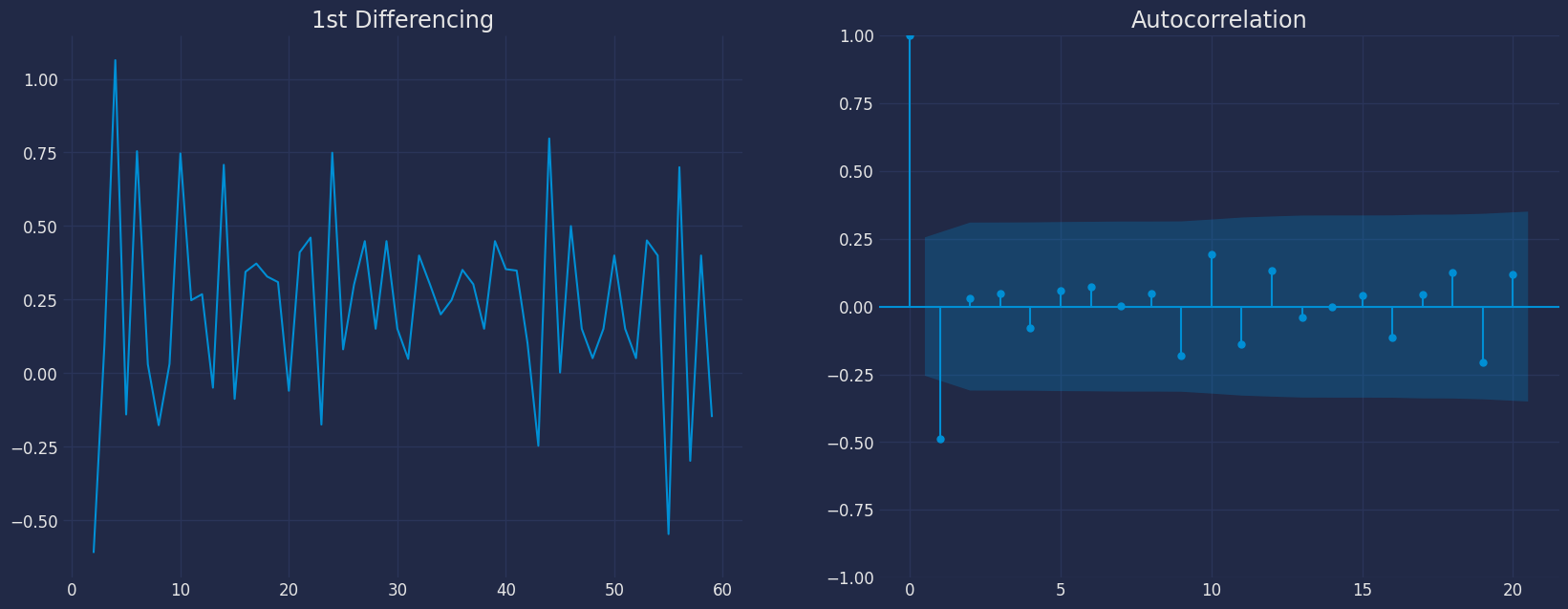

How to find the order of the MA term (q)

- Just like how we looked at the PACF plot for the number of AR terms, we will look at the ACF plot for the number of MA terms. An MA term is technically, the error of the lagged forecast.

- The ACF tells how many MA terms are required to remove any autocorrelation in the stationarized series.

- Let’s see the autocorrelation plot of the differenced series.

- We can see that couple of lags are well above the significance line. So, we will fix q as 1. If there is any doubt, we will go with the simpler model that sufficiently explains the Y.

How to handle if a time series is slightly under or over differenced

- It may happen that the time series is slightly under differenced. Differencing it one more time makes it slightly over-differenced.

- If the series is slightly under differenced, adding one or more additional AR terms usually makes it up. Likewise, if it is slightly over-differenced, we will try adding an additional MA term.

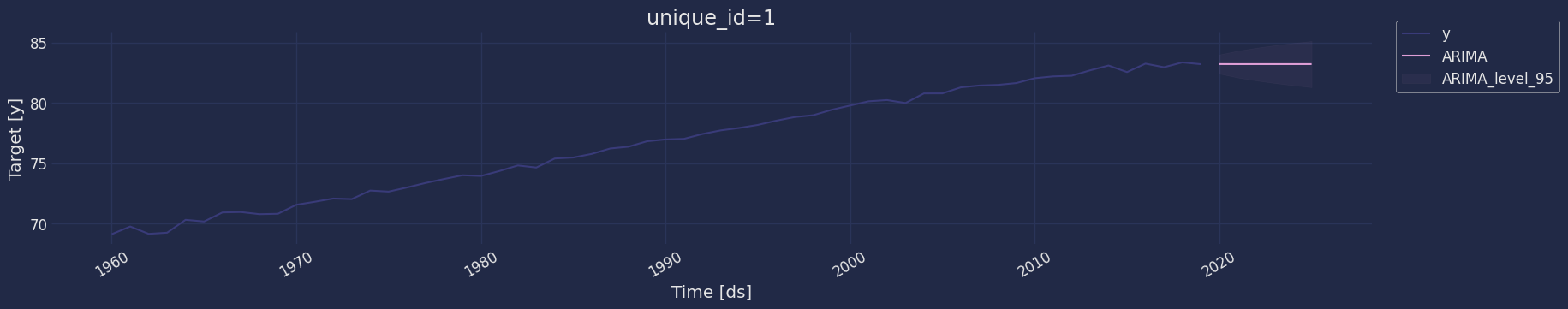

Implementation of ARIMA with StatsForecast

Now, we have determined the values of p, d and q. We have everything needed to fit the ARIMA model. We will use the ARIMA() implementation in thestatsforecast package.

The parameters found are: * For the autoregressive model, * for

the moving average model * and for the stationarity of the model

with a differential with an order

Therefore, the model that we are going to test is the ARIMA(1,1,1)

model.

Instantiate the model

Fit the Model

Making the predictions

| unique_id | ds | ARIMA | |

|---|---|---|---|

| 0 | 1 | 2020-01-01 | 83.206903 |

| 1 | 1 | 2021-01-01 | 83.203508 |

| 2 | 1 | 2022-01-01 | 83.204742 |

| 3 | 1 | 2023-01-01 | 83.204293 |

| 4 | 1 | 2024-01-01 | 83.204456 |

| 5 | 1 | 2025-01-01 | 83.204397 |

| unique_id | ds | ARIMA | ARIMA-lo-95 | ARIMA-hi-95 | |

|---|---|---|---|---|---|

| 0 | 1 | 2020-01-01 | 83.206903 | 82.412336 | 84.001469 |

| 1 | 1 | 2021-01-01 | 83.203508 | 82.094625 | 84.312391 |

| 2 | 1 | 2022-01-01 | 83.204742 | 81.848344 | 84.561139 |

| 3 | 1 | 2023-01-01 | 83.204293 | 81.640430 | 84.768156 |

| 4 | 1 | 2024-01-01 | 83.204456 | 81.457145 | 84.951767 |

| 5 | 1 | 2025-01-01 | 83.204397 | 81.291297 | 85.117497 |

forecast method

Memory efficient predictions. This method avoids memory burden due from object storage. It is analogous to fit_predict without storing information. It assumes you know the forecast horizon in advance.| unique_id | ds | ARIMA | ARIMA-lo-95 | ARIMA-hi-95 | |

|---|---|---|---|---|---|

| 0 | 1 | 2020-01-01 | 83.206903 | 82.412336 | 84.001469 |

| 1 | 1 | 2021-01-01 | 83.203508 | 82.094625 | 84.312391 |

| 2 | 1 | 2022-01-01 | 83.204742 | 81.848344 | 84.561139 |

| 3 | 1 | 2023-01-01 | 83.204293 | 81.640430 | 84.768156 |

| 4 | 1 | 2024-01-01 | 83.204456 | 81.457145 | 84.951767 |

| 5 | 1 | 2025-01-01 | 83.204397 | 81.291297 | 85.117497 |

Model Evaluation

The commonly used accuracy metrics to judge forecasts are:- Mean Absolute Percentage Error (MAPE)

- Mean Error (ME)

- Mean Absolute Error (MAE)

- Mean Percentage Error (MPE)

- Root Mean Squared Error (RMSE)

- Correlation between the Actual and the Forecast (corr)

| unique_id | metric | ARIMA | |

|---|---|---|---|

| 0 | 1 | mse | 0.184000 |

| 1 | 1 | mae | 0.397932 |

| 2 | 1 | rmse | 0.428952 |

| 3 | 1 | mape | 0.004785 |